May 16, 2019 Politics

As the Prime Minister discusses freedom of speech in Paris, Metro’s editor Henry Oliver asks: Who should be allowed to decide what’s beyond the pale?

I became acquainted with the internet and fascism around the same time: third form, 1994. There were cafes in Wellington where you could pay by the quarter-hour to access the “world wide web”. My dad would drink coffee while I chatted about music and comic books with Americans.

In my year at school, there was a boy with cropped red hair, who by fourth form would become a full self-identified skinhead. He shaved his hair even shorter and drew swastikas on his pencil case in vivid. He got in trouble a bit, for being disrespectful to teachers and getting in the occasional fight, but not, as far as I can remember, for the swastikas. We used to argue about politics in class. He thought maybe the Nazis were misunderstood. Said they got the trains running on time, that they fixed Germany’s economy, stuff like that.

I understood freedom of speech as an inherent and universal good. The only cure for bad speech (or even hate speech), my white, liberal, child-of-two-teachers’ “common sense” told me, was more speech. In the marketplace of ideas, the best, most rational ideas would win. Teenage Nazism was a position someone could be argued out of.

The skinhead probably never got in real trouble because it all seemed a little silly. The school likely considered him a troubled teen with an interest in an objectionable sub-culture rather than someone who would ever pose a real threat. And at the time, the internet seemed like a utopia of more speech. There was room for everyone to pursue their own interests without impacting others’ ability to pursue theirs. In fourth form, I wrote an essay about how the internet was an anarchist utopia, where everyone would be free (like really free, not just representative-democracy free) without rules or governance or hierarchies or commerce. On the internet in 1995, it seemed like we could all get along, and not just get along but actively contribute to the project of human progress.

We were wrong on both counts.

The skinhead left school after fifth form. Apparently he got arrested a few years later for beating up someone he and his friends deemed unworthy of making New Zealand their home. And 25 years later, the internet is largely controlled by a small group of monopolistic companies who, among other things, profit off the personal data you leave in your wake as you use their free, advertising-driven services.

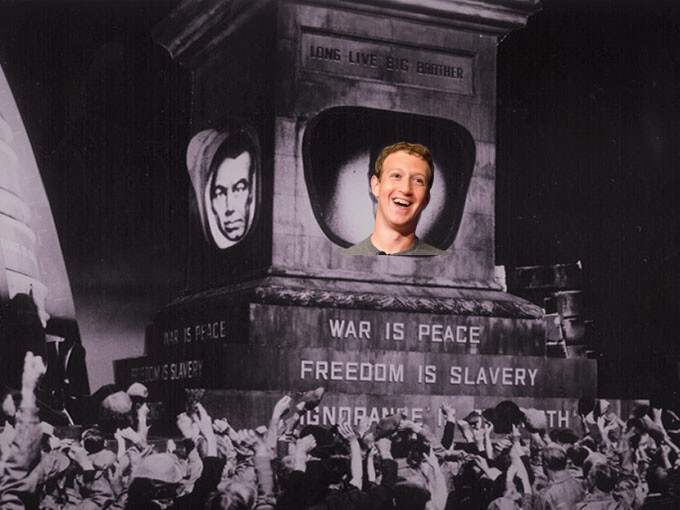

To gather as much data as they can and then sell it to other companies while also selling products to you, these companies require as much of your attention as possible. And what’s the best way to keep your attention? By balancing comfort and novelty. By confirming your biases while also exposing you to increasingly emotive content. Watch any video on even a slightly controversial topic on YouTube and see how its recommendation algorithm immediately takes you further and further beyond the outer reaches of acceptable speech. Christopher Hitchens leads to Sam Harris leads to Jordan Peterson leads to Ben Shapiro leads to Candace Owens leads to Alex Jones leads to the kinds of people who sparked the imagination of my third-form classmate.

In the age of the algorithm, the marketplace of ideas is not just morally bankrupt, it’s actively leading us to shitposters, flat-earthers, white nationalists and everything truthers. Good ideas, we’re learning quickly, aren’t valued by the market as highly as provocative ones. Facebook and Google can know more about us than we know ourselves and use that knowledge to give us an unending supply of all sorts of ideas we didn’t know we wanted. A “good idea” can’t compete.

Still, it may be my demographically privileged liberal upbringing — more speech, conveniently, favours those whose voices have historically had the greatest amplification, while many who technically have free speech have little chance of being heard, or face severe consequences when they are — but the idea of any government having control of what can be said, beyond something pretty close to our current criminal and civil law, scares the shit out of me. (We’ve all seen the kind of people getting elected in some parts of the world.)

But if we aren’t going to shut down the worst ideas ourselves, if we aren’t going to make it not just socially unacceptable but socially reprehensible to spread bigotry and hatred and violence, and if we aren’t going to force technology companies to take some semblance of responsibility for the bad ideas published and promoted and broadcast on their platforms, who is?

This piece originally appeared in the May-June 2019 issue of Metro magazine.
